Open Peer Review: Collective Intelligence as a Framework for Theorizing Approaches to Peer Review in the Humanities

by Jenna Pack Sheffield

published 20 June 2013

Introduction

Traditional, double-blind peer review is an established method of ensuring that scholarship is properly vetted by scholars in a given discipline. As such, an author’s appearance in peer-reviewed journals has become the standard for tenure and promotion decisions. While this process has many supporters, scholars have argued with increasing frequency that this conventional approach to accepting or rejecting scholarly texts is biased, conservative, and subjective (Shatz; Mahoney; Godlee). Due, in part, to these problems and owing to emerging digital technologies, some individuals have begun to advocate for open peer review, and many of those advocates argue for abolishing blind review altogether. This article takes a moderate approach, balancing suggestions for when open peer review can benefit scholarship in the humanities against important concerns that authors and editors must consider before deciding to implement the process.

In this NANO note, I focus on online commenting functions and how they have been—and can be—used for open peer review to help improve the quality of an author’s scholarly work and change the way publishers go about their peer review processes. While open peer review is not necessarily digital, digital technologies allow for a broader range of participants and faster dissemination of knowledge, which is why this article focuses on online open peer review. I focus on commenting functions because they are becoming the niche technology through which open peer review is occurring in digital spaces. In addition, commenting functions allow readers, authors, and editors to interact with each other within the space of the text, an action that has unique affordances for certain types of publishers and authors, depending on the goals they have for their texts. In this note, I will start by discussing Pierre Levy’s philosophy of collective intelligence as the theoretical framework upon which I am building my discussion of open peer review. Levy’s theory can help scholars in the humanities reframe notions of peer review. I will define online open peer review and then explore some of the problems inherent in trying to develop an open review process, along with possible approaches to counteracting these problems. I will discuss how open peer review can play a role in the humanities specifically, suggesting the types of publications and methods that are perhaps best suited for open review.

Collective Intelligence: A Framework for Understanding Open Peer Review

According to Pierre Levy, collective intelligence is a form of “universally distributed intelligence” (13). It is “coordinated in real time” and “results in the effective mobilization of skills” (13). The first premise, that collective intelligence is a form of universally distributed intelligence, is supported by Levy’s well-known claim that “no one knows everything, everyone knows something, all knowledge resides in humanity” (14). While Levy says that there is little doubt that intelligence is universally distributed, he argues that there are not often spaces that form concrete realizations of this intelligence. This is where the idea of real-time coordination emerges. He says that at some point, the coordination of real-time intelligence must occur through the use of digital technologies. According to Levy, “new communications systems should provide members of a community with the means to coordinate their interactions within the same virtual universe of knowledge” (14). This system, or virtual universe, enables members of “delocalized communities” to interact. The goal of collective intelligence is to enrich the intelligence of individuals rather than to contribute to what he calls the “cult of fetishized communities” (13). What Levy means here is that collective intelligence is not meant to be a totalitarian project—the intelligence of the group does not result in blind acceptance of one ideal, and the group knowledge that results stems from the divergent ideas of diverse individuals. The knowledge of the group, in the same vein, contributes to the knowledge of the individual.

Knowledge communities formed around the notion of collective intelligence can serve as an excellent framework for viewing the potentials of open peer review. Levy’s theory can be applied to open peer review through commenting features because these comments are real-time acts that encourage participants, whose knowledge is universally distributed, to hold conversations in the same “virtual universe” in order to enrich the overall intelligence of the group and the individuals who are part of the group. Yet, there are too many gaps in Levy’s definition for it to apply to every situation. For instance, how do we define “participants” in open peer review? Levy suggests that everyone—all of humanity—has knowledge to contribute; however, would a renowned Shakespeare scholar necessarily want a physics professor to have a say in the fate of her article? I will discuss these gaps below, after first providing a more thorough definition of open peer review.

Open Peer Review: What Is It?

While open peer review is not a new topic in the scholarship on peer review, it often lacks a consistent definition because it has been practiced in many different forms. The most general definition of open review has been articulated by David Shatz, who wrote the first book-length work on peer review in the humanities: Peer Review: A Critical Inquiry (2004). He defines it as “review by the scholarly community at large, instead of a few anonymous referees along with an editor or board” (16). In this case, open peer review does not have to be digital. However, the concept has garnered much more attention in the digital age because new technologies make open review more feasible to accomplish. Significantly, Shatz suggests that this is review by the “scholarly community” at large, but I will argue below that it can occasionally be beneficial to open up scholarly processes to the public, because the collective intelligence of the public can potentially improve the quality of one’s scholarship. By public, I mean anyone willing to read and comment on a piece. It might seem idealistic to make such a suggestion, and indeed I will complicate this notion below; but, when we (as humanities scholars) begin trying to shut out people whom we do not believe can contribute valid knowledge to our work, we are still functioning as gatekeepers in a way that makes open review vulnerable to the bias and subjectivity for which blind review is criticized.

Returning to Shatz’s definition but going beyond it, there are some additional points to be made about open review using commenting functions. The process differs from traditional vetting in the following ways: the author knows who is commenting on her work (i.e., readers are identifiable as opposed to anonymous); the author can respond to those comments; the author receives knowledge from more than two reviewers; and she is typically able to make changes to the work based on these multiple reviews. The general nature of open review comments is that readers suggest revisions or provide further resources on a topic. Readers will also occasionally delve into conversations—even arguments—with the author or other readers about a given section of the text at hand. Typically, commenting is open to the public, but some sort of verification is usually required, such as one’s name, email address, and so forth. Some journals and book-length open review experiments require users to indicate an institutional affiliation or to even go through a verification process to ensure they are scholars.

Problems with Open Peer Review

It is important to consider the inherent problems in open peer review before determining the ways it can be used beneficially. As opposed to setting up a binary of good/bad peer review methods, which would obscure the many intricacies involved, I am instead suggesting concerns that need to be thought through before a humanities publication or author chooses to adopt an open review approach.

One important concern with open peer review is timing: should it occur before, during, or after anonymous/blind review? Conducting the two processes simultaneously could work as long as authors are allowed time to revise using all comments. If forced to deal with all the comments at the same time, though, authors might value the anonymous readers’ input more because the editors will consider the anonymous readers the “experts.” Thus, this approach could diminish the importance of comments from open review readers. Conducting open review first, on the other hand, could be a more fruitful option, in the sense that it could lead to more thorough revisions by the author based on open reader feedback. In June 2006, Nature launched a trial open review, which has been described as a failure by many scholars, in part because the journal chose not to adopt the process after trying it. Yet, Kathleen Fitzpatrick has pointed out in Planned Obsolescence: Publishing, Technology, and the Future of the Academy (2011) that there may have been problems from the start with the Nature experiment. For one, she notes, “open peer review took place at the same time as anonymous review, rather than as a preliminary phase, preventing authors from putting the public comments they received to use in revision” (27). Fitzpatrick’s reading indirectly suggests the benefits of running open review prior to anonymous review. If the goal is to improve knowledge by drawing upon multiple individuals’ expertise, as Levy suggests, both anonymous and open peer review should benefit the author’s work.

If open review were to run after anonymous review, on the other hand, the anonymous reviewers might already have rejected pieces that could have been improved by open reader commentary. Unless open review readers are allowed a say in the publication decision after the anonymous reviewers have made theirs, this method is less likely to be helpful because it risks rejecting potentially good scholarship.

This brings up the next concern: the reader’s input in the publication decision. Open review readers can comment before or after a piece is published. If open readers comment before a piece is published, their roles in the publication decision could include the following: readers’ comments could be considered by the editor or by the editor and anonymous reviewers before the publication decision is made; or, readers could be encouraged to submit a publication decision vote to the editor, which could count as a percentage of the overall ratings by the reviewers (oftentimes, reviewers are split in their decisions and editors have to make the final decision, anyway). If open readers are only allowed to comment after a piece is published, then the main concern would be whether or not their comments could potentially cause a piece to be removed from a site (essentially un-publishing the piece). In the Nature experiment, for example, while the editors read all the comments, it had been decided beforehand that only anonymous reviews would be considered in the publication decision (Fitzpatrick 27). This decision seemed to make open review comments inferior to the anonymous review comments, which means that open review readers will be less likely to take their own comments seriously—or even to comment at all—defeating the purpose of open review.

However, as open review is relatively accessible to the public, should a random reader’s comments count toward an editor’s publication decision? Many current experiments do not allow this to happen because certain readers might not be in the same discipline as the writer. While the readers might have valuable knowledge to contribute, the anonymous peer reviewers assigned to a potential article typically are chosen because of their expertise in a subspecialty of their field. Allowing non-academic or non-expert readers to play a role in the publication decision might result in something being published that is not up-to-date in regard to current scholarship. However, this does not mean that the public cannot provide valuable contributions. The theory of collective intelligence would suggest that anyone has the potential to contribute valuable knowledge, but this idea does not take into account the different ways knowledge is formed, protected, and discussed in various disciplines. The best approach might be for editors and publishers to find a balance in which anonymous reviewers, identifiable open readers, and ultimately the editors all have a say in the publication decision. If editors have the final decision, then they can filter out comments from the public—and from scholars—that might be incorrect or not useful or which do not follow standard disciplinary practices. Yet, the editors may still allow open readers who are and are not experts in the field to contribute (or distribute, in Levy’s terms) intelligence to the project.

PLOS One, an open-access, online science journal, poses a unique model. The open reader can actually make “minor corrections” or “formal corrections” by adding comments. The staff will review these comments and determine if the changes should be made (“Guidelines for Comments and Corrections”). Thus, the staff does have control over changes being made to an article, but open readers have a good amount of control as well. PLOS One serves as an interesting model because anonymous/blind review is still used, but open review readers’ comments can still play a major role in the ultimate quality of a given article.

Another consideration for an open reader’s role is the potential of rating other readers’ comments. The quality of one reader’s review could obviously be better than another’s; thus, if readers and authors could rate reader commentary, then readers with a higher level of trustworthiness could have more weight in the publication decision for an article or in any changes that need to be made to an article. Fitzpatrick, for instance, brings up an online forum for software developers called Advogato, which uses computational “trust metrics” to evaluate users of the forums based on their interconnections with other users (36). It is beyond the scope of this article to explore all possibilities, but ratings of open reader reviews and of readers themselves could indeed promote more detailed comments and provide editors with the knowledge of whom they can trust in a publication decision. In an even more ideal situation, extensive open reader comments could be considered scholarly work, or research, that could be counted towards tenure and promotion, as opposed to being considered “service.”
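To make the idea of weighting readers’ input more concrete, the following is a minimal sketch in Python. It does not describe Advogato’s trust metric or any journal’s actual system. The names `Vote`, `open_reader_score`, and `combined_score`, the trust scores between 0 and 1, and the 25 percent share given to open readers are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Vote:
    reader: str       # identifiable open reader (hypothetical field)
    recommend: bool   # True if the reader recommends publication
    trust: float      # hypothetical trust score between 0 and 1


def open_reader_score(votes):
    """Return the trust-weighted share of open readers who recommend publication."""
    total_trust = sum(v.trust for v in votes)
    if total_trust == 0:
        return 0.0  # no trusted input, so open readers contribute nothing
    favorable = sum(v.trust for v in votes if v.recommend)
    return favorable / total_trust


def combined_score(reviewer_score, votes, reader_share=0.25):
    """Blend the anonymous reviewers' score (0 to 1) with the open readers' score.

    reader_share is the assumed fraction of the overall rating given to open readers.
    """
    if not 0.0 <= reader_share <= 1.0:
        raise ValueError("reader_share must be between 0 and 1")
    return (1 - reader_share) * reviewer_score + reader_share * open_reader_score(votes)


votes = [Vote("reader A", True, 0.9), Vote("reader B", False, 0.3)]
print(combined_score(0.5, votes))
```

In a sketch like this, the result would serve only as one input to the editor, who would still make the final publication decision.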

Ultimately, the timing of open peer review and the level of reader responsibility in the publication decision constitute decisions that individual publications will have to make, ensuring that they balance their overall mission with the changing needs of authors and readers. Yet, even if journal or book editors do decide to implement an open peer review process of one form or another, we are left with the question of incentive. What will incentivize the public—or even other academics—to comment on submitted work?

First, authors and publishers who want to seek a wide range of readers should tap into communities that are interested in the topics addressed by the text at hand. Existing online communities with common interests are naturally going to want to contribute to the work. Authors should strive to be active members of these communities to promote the goodwill that might later bring about faithful readers. For instance, when Noah Wardrip-Fruin published his Expressive Processing: Digital Fictions, Computer Games, and Software Studies (2009) online for reader commentary (as an early open review process), he already had a fan base on his blog. He drew most heavily on that community of academics and non-academics. In his afterword, he comments on other similar projects by authors Siva Vaidhyanathan and McKenzie Wark, claiming that one of the inherent problems with their processes was that they tried to build communities around their work from scratch via publicity, an approach that failed to garner as much useful traffic to their projects. Thus, incentive, or motivation, could potentially occur organically if the author seeks reader comments from those interested in his or her topic.

Even more likely, if contributing to others’ works becomes part of the requirements before one could publish in a given journal, for instance, scholars could be incentivized by their own desire to publish. There are projects attempting to address this concern, and those of us in the humanities who have something at stake in this issue can look to these developments for possible solutions to the incentive problem. In Media Res, one of Fitzpatrick’s projects, “asks five scholars a week to comment briefly on some up-to-the-minute media text” (Fitzpatrick 9).

According to the In Media Res website, each weekday, “a different scholar curates a 30-second to 3-minute video clip/visual image slideshow accompanied by a 300-350-word impressionistic response” (“About In Media Res”). All curators of the week must agree to comment on one another’s work that week. This is one small attempt at ensuring some level of participation. Essentially, the incentive is publication, since acceptance of your piece implies that you will comment on the other pieces of the week. It is important to note that this process is not deemed peer review, per se, by the In Media Res group, but their process offers a possible solution to the incentive problem for open review. To take this a step further, publications could refuse to accept manuscripts from scholars who have not yet become active commenters on their online publication’s articles, encouraging these scholars to begin providing feedback on the site. Ultimately, though, these incentives are only going to serve scholars. Plus, processes like this are going to require a change in the way scholars think about their work and in the way universities evaluate scholarly work for tenure and promotion. Changes like these do not happen quickly in academia.

As mentioned, some open review projects require identity verification, which adds another layer of complexity to the issue. Open reviewers should not be anonymous. This would make the process too similar to blind review. It would be very easy for anonymous commenters to write rude and demeaning comments or to spam the comments section if their identity could be hidden. However, some verification processes require contributors to be scholars and have, for example, a valid university email address. This leaves out members of the public who might have valuable contributions. Collective intelligence implies the opening up of the review process to anyone, and verification procedures limit these possibilities; however, spam, rude comments, and incorrect advice all have the potential to dismantle an effective process. Therefore, publishers need to consider how they will verify a contributor’s identity and how open they want to be with their contribution options. Perhaps allowing anyone who provides their name and email address to comment would be a useful approach, with editors having the ability to post or delete comments. Fitzpatrick, whose Planned Obsolescence was posted online for open review before it was revised for print, controlled comments on her site, but only removed spam or what the author deemed an inappropriate comment (although it is unclear what she considered to be an inappropriate comment).

Benefits of Open Peer Review

The above issues are legitimate concerns that need to be addressed if an editor or author is interested in adopting open review. These complications can deter publishers from experimenting with open review, and they can also deter scholars from wanting to participate in such a process. However, there are many instances in which open peer review can be effective. It does not seem likely that this process would replace traditional review processes altogether, but there are times in which it could be used to great benefit.

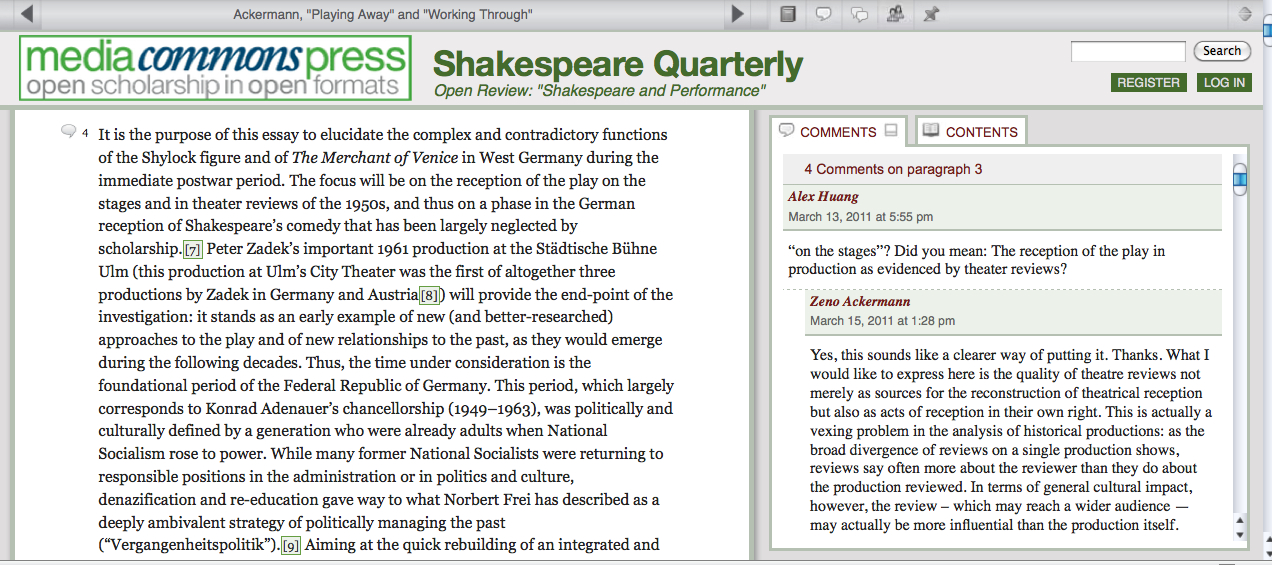

One benefit, which I have already implied above, is that the quality of an author’s article or book can be improved by multiple reader comments. In addition, identifiable readers’ comments can often be more thorough and helpful than those of anonymous reviewers. As Levy notes, online communities can work together to pool their knowledge towards a common goal. A solid example is that of Shakespeare Quarterly (SQ), which attempted an open review process for its fall 2010 issue. SQ used CommentPress, which is an “open source theme and plugin for WordPress that allows readers to comment on an author's work-in-progress as displayed in a blog or webtext setting” (“Welcome”). Comments could be made on the document as a whole or within individual paragraphs.

SQ’s review was only partially open. Scholars were given the choice to post articles in Media Commons (a digital scholarly network) for comments and feedback, but the editors made the final decision about publication. Public reviews took place before the articles were sent out for blind peer review, so the open reader comments enabled the authors to draw on the knowledge of different readers and (possibly) improve the quality of the articles.

During SQ’s open review process, four essays that had not yet been accepted for publication were posted, along with three review essays. In the end, 41 people made more than 350 comments, many of which prompted changes by the authors (Cohen). Some of the participants were invited scholars, but anyone willing to publish their thoughts under their name could comment during the two-month review. Significantly, all seven texts that were posted to the Media Commons site were accepted for the fall 2010 print publication of SQ (Howard).

While there is no clear way to know if opening these articles up to the public through open peer review is indeed what made them worthy of final publication, the authors who participated have made various comments indicating this was the case. Patricia Cohen cites participant Alan Galey as saying that he was “‘entirely won over by the open peer review model.’ The comments were more extensive and more insightful, he said, than he otherwise would have received on his essay.” Ayanna Thompson, another contributor, states that the “lack of anonymity encouraged reviewers to engage with the material in a much more thoughtful and thorough way than in blind reviews because their name was attached to the comments” (Howard). These participants’ comments indicate that open reviewers’ responses were actually more effective and thorough than these authors had experienced in the past with blind reviews in other journals.

Similarly, discussing his review process, Wardrip-Fruin stated, “[the public reviewers’] comments contributed a huge amount to improving the manuscript and my understanding of the field. Further, they contributed things that it would have been nearly impossible to get from press-solicited reviews.” He claims that the social process these comments went through made him trust his commenters’ suggestions more than he trusted the press-solicited reviews. Both the SQ and Expressive Processing experiments point to some of the most striking benefits of open peer review: open reader commentary can help the author improve her knowledge of the field; readers’ comments are often much more in-depth than traditional anonymous reviews; and ultimately, readers’ evaluations can help improve the overall quality of the text at hand. While this has been the goal of traditional peer review since its inception, the idiosyncrasies of two anonymous/blind reviewers do not always improve a manuscript.

Another benefit is that members of the public can contribute knowledge that their own disciplinary expertise brings to the table—knowledge that the author might never have received from two blind reviewers within her field of study. Shatz argues that “[n]on experts are…a bulwark against conservatism, as they are not wedded to the assumptions of the specialty” (155). In other words, non-academics or non-experts who contribute reviews to a text can bring a fresh perspective. Levy’s notion of universally distributed intelligence is brought to mind here, as the vastness of the Internet allows for individuals from many different places and with many different specialties to bring about a more cohesive, strong intelligence.

A final benefit of open review is that it could actually encourage authors to write better quality texts when they first submit them. While Shatz claims that “[t]he present hierarchical system of journals and presses provides incentives for people to do their best work,” and “if there were no peer review system, or standards were relaxed, the quality of the work would fall off considerably” (145), Fitzpatrick offers an alternative perspective. She cites the editors of Atmospheric Chemistry and Physics, whose statistics confirm that collaborative peer review increased the quality of their journal (which they based on the journal’s high rank in their field—12th out of 169). They state that “public peer review and interactive discussion deter authors from submitting low quality manuscripts,” indicating another possible benefit of open peer review, which is that the potential for a larger, public audience might increase an author’s desire to prepare a strong, well-researched article (27). While one would hope that authors would try their best no matter what venue they choose for peer review, it does seem likely that making one’s work available to a very large group of people earlier on in the process could inspire additional care.

Similarly, reviewers might take more care with their comments in an open commenting setting because their reviews have their names attached, and, therefore, their reputation and expertise may be judged by other readers. Wardrip-Fruin appreciated the open review process because it allowed for “review of the reviews,” meaning that many reviewers weighed in on each other’s perspectives. In addition, there is a level of quality control inherent in this process that is not necessarily available in blind review. Readers are going to be held accountable for their responses more so than in blind review because the author and other readers know who they are and can easily disagree with their comments. Also, much like in the writing classroom’s version of peer review, when multiple people point out the same issue with a work, this often indicates to the author that a change does indeed need to be made. If only one blind reviewer suggests a change, the author is left to wonder whether this change is necessary, a personal preference of the reviewer, or perhaps even an incorrect observation.

When Open Peer Review Can Work

The sciences often use open peer review, but this model is only beginning to gain ground in the humanities, and the differences between how the two fields perform peer review remain divisive. This could be because knowledge in the humanities means something different than it does in the sciences. As Shatz notes, scientists see their research as “related to truth in a way that humanists do not. In the scientist’s perception, the state of human knowledge will be poorer in concrete ways if there is a wrong decision about publication or funding” (5). In the humanities, if “a paper goes unpublished… ‘truth’ isn’t lost to the world” (6). Thus, the sciences might benefit from open peer review more than the humanities because open peer review allows for quick dissemination of knowledge and feedback from many people: if there is a true error in an experiment, for example, it is more likely to be caught, and caught quickly, when more individuals are reading the article. However, given the high rejection rates and slow turnaround times common in many humanities publications (which can delay both tenure and promotion), these publications too could benefit from open review.

Open review invites a larger audience and, consequently, more knowledge, allowing scholars to pool their knowledge toward a common goal, as Levy suggests. Thus, the first step in open peer review is to determine the verification process, that is, to determine the “public” for one’s publication. Verification requirements should be as limited as possible (perhaps only name and email address), but editors should reserve the right to remove inappropriate comments. This will allow the publication to draw on the widest possible “virtual network.” Open peer review will likely work best if conducted prior to blind review so that authors can use the distributed intelligence of their readers to improve their writing before it goes through blind review. This way, authors will receive feedback both from the public and from specialists (blind reviewers), and they can consider all the feedback important since the public’s feedback will influence the quality of the article presented to the blind reviewers; the reviewers, in turn, will likely require their feedback to be addressed in order to recommend publication. In order to garner participants for this process, readers’ comments might need to play into the publication decision. In addition, as many scholars have noted, there should be some sort of professional incentive for participating in such an activity, and participation should perhaps count as a form of scholarly activity. Bonnie Wheeler says of blind review in the humanities, “Most scholars don’t list pieces or journals for whom they have provided anonymous peer review on their CVs, especially if they are reluctant to ‘out’ themselves. Furthermore, I’ve received several complaints…over the past few years about the increasing unwillingness of specialists to provide peer review, precisely because it is an ‘unrewarded activity’” (314).

Open peer review, similarly, has the potential to go unrewarded, but because of the lack of anonymity, it also has more potential for counting towards scholarly activity. With anonymous/blind review, publishers could offer recommendation letters to accompany tenure portfolios for reviewers who contribute regularly and effectively to their publication. For online open peer review in particular, commenters could argue for the effectiveness of their comments if online publications employed commenter rankings.

Ultimately, journal editors and book publishers in the humanities should consider online open peer review as a way to produce collective intelligence. This is not to say that blind peer review should be eliminated; the traditional process has its benefits. For example, anonymity can be seen as protecting authors and reviewers. Moreover, in our present historical moment, blind review still has too strong a grasp on tenure and promotion. Scholars, rightly, are not going to want to risk publishing in a journal that is not peer-reviewed by traditional standards because their publication might not be viewed as valid. In addition, publishers are right to be nervous about moving to open peer review because their journals might lose quality submissions or status. The challenge for the future will be finding a balance that provides enough incentive and low enough barriers for engagement, so that many people will engage in humanities scholarship, collectively pooling together their knowledge toward the betterment of a text’s overall quality.

Works Cited

“About In Media Res.” In Media Res. Media Commons, n.d. Web. 10 Apr. 2012.

“About the Journal.” Journal of Interactive Technology and Pedagogy. CUNY, n.d. Web. 10 Apr. 2012.

Cohen, Patricia. “Scholars Test Web Alternative to Peer Review.” The New York Times Online. New York Times, 23 Aug. 2010. Web. 20 Feb. 2012.

Fitzpatrick, Kathleen. Planned Obsolescence: Publishing, Technology, and the Future of the Academy. New York: New York UP, 2011. Print.

Godlee, Fiona. “The Ethics of Peer Review.” Ethical Issues in Biomedical Publication. Eds. Anne Hudson Jones and Faith McLellan. Baltimore: Johns Hopkins UP, 2000. Print.

“Guidelines for Comments and Corrections.” Plosone.org. n.d. Web. 11 Jan. 2013.

Howard, Jennifer. “Leading Humanities Journal Debuts Open Peer Review, and Likes It.” The Chronicle of Higher Education. The Chronicle of Higher Education, 26 Jul. 2010. Web. 15 Feb. 2012.

Jenkins, Henry, et al. “Confronting the Challenges of Participatory Culture: Media Education for the 21st Century.” Digitallearning.macfound.org. MacArthur Foundation White Paper Series, 2006. Web. 10 Dec. 2010.

Katz, Stan. “Open Peer Review in Humanities Journals?” The Chronicle of Higher Education. The Chronicle of Higher Education, 27 Jul. 2010. Web. 15 Nov. 2011.

Levy, Pierre. Collective Intelligence: Mankind’s Emerging World in Cyberspace. New York: Plenum Trade, 1997. Print.

Mahoney, Michael. “Publication Prejudices: An Experimental Study of Confirmatory Bias in the Peer Review System.” Cognitive Therapy and Research 1.2 (1977): 161-175. Print.

Shatz, David. Peer Review: A Critical Inquiry. Lanham, MD: Rowman & Littlefield, 2004. Print.

Vershbow, Ben. “Expressive Processing: An Experiment in Blog-based Peer Review.” Futureofthebook. Institute for the Future of the Book, 22 Jan. 2008. Web. 10 Dec. 2011.

Wardrip-Fruin, Noah. “Blog-based Peer Review: Four Surprises.” GrandTextAuto.org. N.p., 12 May 2009. Web. 10 Apr. 2012.

“Welcome.” Futureofthebook.org/commentpress. CommentPress, 2012. Web. 10 Apr. 2012.

Wheeler, Bonnie. “The Ontology of the Scholarly Journal and the Place of Peer Review.” Journal of Scholarly Publishing 42.3 (2011): 307-322. Print.

Images:

All images are screenshots of the online texts and websites discussed in this note.

1. Wardrip-Fruin, Noah. “Expressive Processing: An Experiment in Blog-Based Peer Review.” GrandTextAuto.org. N.p., 22 Jan. 2008. Screenshot. 10 Apr. 2012.

2. “Italian Television and Media Convergence [April 16 - 20, 2012].” In Media Res. Media Commons. n.d. Screenshot. 9 Apr. 2012.

3. Ackerman, Zeno. “Playing Away and Working Through: The Merchant of Venice in West Germany, 1945-1960.” Media Commons. Shakespeare Quarterly, n.d. Screenshot. 9 Apr. 2012.